What changes can be expected on July 1st, 2026 following the reform of designs and models?

Introduction

The Implementing Regulation (EU) 2026/138 will become applicable as from July 1st, 2026. This text completes the modernisation of European design law initiated by the substantive reforms that came into force on May 1st, 2025.

Whereas the previous reform addressed the substantive provisions, this new text profoundly transforms the procedural rules governing the filing of design applications before the European Union Intellectual Property Office (EUIPO). This evolution responds to a need that practitioners have identified for several years: the inadequacy of graphic representation rules to current technological realities.

A structural procedural reform brought by the new Implementing Regulation on designs

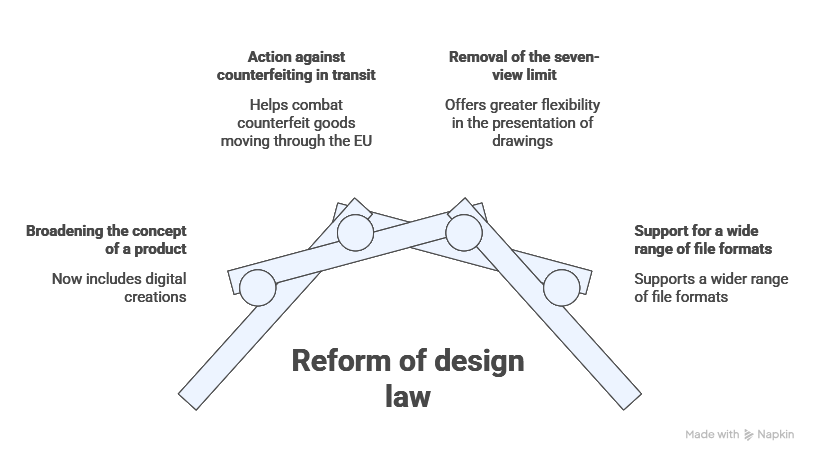

The reform of European design law unfolded in two successive stages. Regulation (EU) 2024/2822, which became applicable on May 1st, 2025, overhauled the substantive framework by redefining several key concepts. In particular, the concept of “product” has been broadened to encompass digital creations, including interfaces, animations and graphic elements. Rights holders have also been granted the ability to take action against counterfeit goods merely transiting through the territory of the European Union. New exceptions to the rights conferred on holders have been introduced, new deadlines and fees have been established, and a repair clause has been provided for spare parts of complex products.

For further information on this reform, we invite you to read our article on the subject.

In a second stage, the Implementing Regulation 2026/138 adapts the procedural rules of filing to these new realities. This adjustment rests on a finding made by the European Commission: the evolution of visualisation technologies and the emergence of non-physical products require representation formats more sophisticated than those allowed under the previous framework. The Regulation thus implements structural changes that should command the attention of applicants.

Without claiming to be exhaustive, the following developments focus on three significant contributions for applicants: the removal of the seven-view limit, the acceptance of new representation formats, and the easing of the regime governing multiple applications for designs.

The end of the seven-view limit: a decisive step forward in the representation of creations

A constraint no longer suited to complex creations

Under the rules applicable before the new Regulation, an application for the registration of a Community design could not contain more than seven graphic or photographic representations per design filed. This constraint, relevant at the time it was adopted, had become a real impediment for applicants confronted with complex creations.

How can one represent, in seven static views, an animated user interface comprising several successive states, an articulated product adopting different positions of use, or an object whose geometry can only be grasped from a multiplicity of angles? Applicants were regularly compelled to make difficult trade-offs, sacrificing the clarity of certain features in favour of others deemed more important.

A new freedom, framed by the requirement of consistency between representations

Regulation 2026/138 removes the previous strict limit of seven views. Applicants will henceforth enjoy an extended freedom to illustrate all the visual features being claimed. This evolution strengthens the legal certainty of the rightholder by enabling a more precise definition of the scope of protection.

A technical reservation nonetheless remains: it is likely that the EUIPO will maintain a technical ceiling within its online filing system, similar to the UKIPO approach of capping electronic filings at ten views. The precise terms will be set out in the Office’s forthcoming guidelines.

Above all, the Regulation specifies that the subject matter of the design claimed shall be determined by the combination of all the visual features resulting from all the representations filed. Perfect consistency between all views submitted thus becomes imperative: any discrepancy may lead to an objection from the examiner, or even to a loss of rights over certain distinctive elements.

The acceptance of new representation formats: videos, animations and CAD files

Towards a dynamic and digital representation of creations

As a logical complement to the abolition of the seven-view limit, the new Regulation enshrines the acceptance of considerably broadened representation formats. Applicants may now file:

- static representations (drawings, photographs);

- dynamic and animated representations (videos, animated sequences);

- computer-generated images;

- computer-modelling files, in particular CAD (Computer-Aided Design) formats.

This evolution constitutes a decisive step forward for designers of animated graphical user interfaces, XR/VR environments and exclusively digital products, the protection of which fitted poorly within the static representations imposed until now.

Heightened vigilance regarding the elements excluded from protection

The question of disclaimed features, traditionally indicated by dotted lines or shaded areas in two-dimensional views, remains to be clarified in the context of video files and 3D modelling. How is one to indicate, within an animated sequence, that a given element does not form part of the protection being claimed? Operational guidelines from the EUIPO are awaited on this point.

In this respect, recent case law, in particular the CJEU judgment in Ferrari of 28 October 2021 (C-123/20), reminds us that the scope of protection conferred by a Community design depends closely on the precision with which its features are identified in the application for registration. The new formats offer, in this regard, fresh opportunities, but also fresh risks.

The easing of requirements for multiple applications

The reform also modifies the regime governing multiple applications for European Union designs. Until now, several designs could be included in a single application provided that the products concerned belonged to the same class of the Locarno Classification. This requirement could limit the practical value of multiple applications, particularly where the applicant wished to protect, in a single filing, a product range, accessories or variants falling within different categories.

As from July 1st, 2026, this single-class requirement will be abolished. Applicants will therefore be able to include, in a single application, designs applied to products falling within different classes, which should facilitate the management of certain complex filings. This new flexibility will nevertheless remain subject to limits: a multiple application may not contain more than fifty designs.

This added flexibility will need to be factored into filing strategies. On the one hand, it will make it possible to streamline procedures, group together related creations and simplify the administrative management of portfolios. On the other hand, it will be accompanied by a change in the fee structure, with a flat fee for each additional design. In practice, multiple applications will therefore remain a useful tool, but they will need to be assessed on a case-by-case basis, depending on the number of designs to be protected, their commercial coherence and the overall cost of the operation.

Strategic levers to activate in order to take advantage of the new framework

Deferring or anticipating filing: a case-by-case assessment

For creations whose representation would substantially benefit from the new formats, deferral of filing until July 1st, 2026 deserves consideration. This strategy is particularly compelling for:

- animated graphical user interfaces (GUIs);

- articulated products or products with variable geometry;

- creations intended for XR/VR environments;

- digital objects whose form evolves through interaction.

Conversely, it may be strategically necessary to establish a priority date before July 1st, 2026, typically where there is an imminent risk of disclosure by a third party or where immediate enforcement is required. In such cases, an intermediate solution warrants consideration: filing an initial application with still images, then claiming the priority of that filing in a new application made after July 1st, 2026 that exploits the new formats.

Auditing existing portfolios and adapting internal practices

Holders of existing design portfolios should identify those creations whose initial representation, constrained by the former rules, would benefit from new filings exploiting the now-permitted formats. Such a portfolio audit constitutes an essential prerequisite to any optimisation strategy.

Adapting internal processes is equally a key issue: the process for preparing representations, which involves various stakeholders such as designers, marketing teams and industrial property advisors, must be ready to deliver the new formats required, in strict compliance with the requirement of consistency between representations. Failing this, the very richness of the available means of representation increases the risk of inconsistency, and consequently of objections during examination.

Conclusion

No single tool, taken in isolation, secures the optimisation of a post-reform Community design filing. The abolition of the seven-view limit offers an unprecedented freedom, but the acceptance of video and CAD formats imposes heightened rigour as to the consistency of representations. The strategic deferral of filings beyond July 1st, 2026 is justified only for certain creations and must be balanced against the urgency of the competitive context. In practice, applicants who master their strategy will combine a portfolio audit, careful preparation of representations and well-judged timing of filings.

Dreyfus law firm assists its clients in the management of complex intellectual property matters, providing tailored advice and comprehensive operational support for the full protection of intellectual property assets.

Dreyfus law firm with the support of the entire Dreyfus team.

Q&A

1. Can already-registered Community designs be supplemented with new views after July 1st, 2026?

No. Implementing Regulation 2026/138 does not provide for any mechanism allowing the retroactive supplementation of representations for existing registrations.

2. What are the consequences for infringement actions pending on July 1st, 2026?

Infringement actions brought on the basis of Community designs registered under the former regime continue to be assessed by reference to the representations actually filed.

3. Does a richer representation allow for broader protection?

Not necessarily. The addition of views, animations or digital formats should not be seen as a way to artificially broaden the scope of protection. These elements primarily serve to better identify the design being claimed. The more detailed the representation, the more it may also contribute to precisely defining the protected subject matter. The key issue is therefore to select formats that enhance the clarity of the filing without creating unnecessary limitations.

4. What is the principal risk associated with the use of multiple representation formats?

The main risk lies in inconsistencies between the different representations. The Regulation specifies that the protected subject matter is determined by the combination of all visual features. Any divergence between a photographic view, a video or a CAD file may give rise to an objection from the examiner, or even to a loss of rights over certain elements. Rigorous verification of inter-representation consistency is imperative prior to any filing.

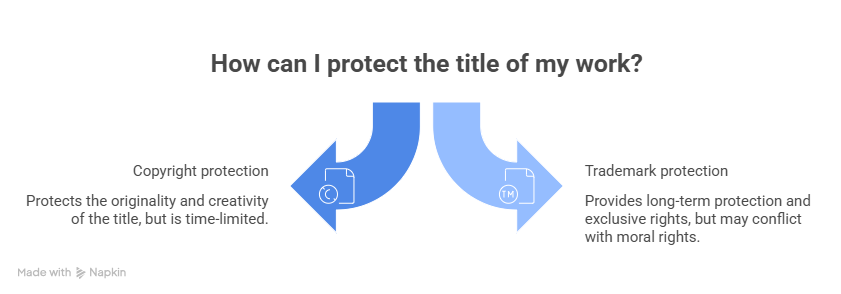

5. How should design protection and copyright protection be articulated for a digital creation?

An animated graphical user interface or a digital object may benefit from dual protection: through design law (subject to novelty and individual character) and through copyright (subject to originality). For more information on this dual protection, you may refer to the following article.

This publication is intended to provide general guidance and to highlight certain issues. It is not designed to apply to specific situations and does not constitute legal advice.